What is a Robots.txt file?

A Robots.txt file is a text file that tells web crawlers and other automated agents which pages of your knowledge base they should not crawl. It contains rules that specify which pages each crawler is allowed to access.
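To illustrate how rules are applied per crawler, the sketch below feeds a small Robots.txt file to Python's standard-library `urllib.robotparser` module and asks which URLs two crawlers may fetch. The domain `example.com`, the paths `/internal/` and `/docs/intro`, and the crawler name `SomeOtherBot` are hypothetical. Search engines run their own parsers, so treat this as an approximation of how the rules are read, not as how Google or Bing evaluate them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: Googlebot gets its own group; every other crawler
# falls back to the catch-all (*) group, which blocks the whole site.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /internal/

User-agent: *
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

checks = [
    ("Googlebot", "https://example.com/docs/intro"),     # True: its group only blocks /internal/
    ("Googlebot", "https://example.com/internal/x"),     # False
    ("SomeOtherBot", "https://example.com/docs/intro"),  # False: falls back to the * group
]
for agent, url in checks:
    print(agent, url, parser.can_fetch(agent, url))
```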

NOTE

For more information, read this Google help article.

Accessing Robots.txt in Document360

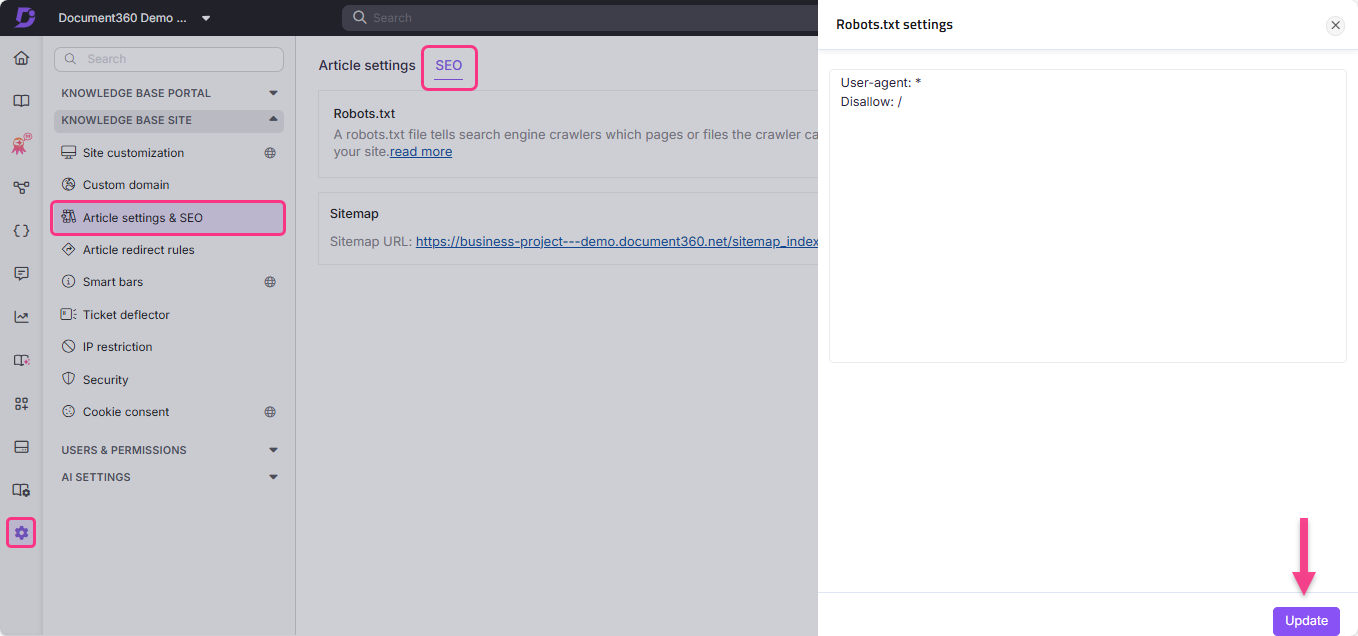

To access Robots.txt in Document360:

Go to Settings in the left navigation bar.

Go to Knowledge base site > Article settings & SEO > SEO tab.

Find Robots.txt and click Edit.

The Robots.txt settings panel appears.

Type the rules you want to apply.

Click Update.

Robots.txt use cases

A Robots.txt file can block a folder, a single file (such as a PDF), or specific file extensions from being crawled.
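As a minimal sketch, the rules below block a hypothetical folder (`/private/`) and a single hypothetical PDF (`/downloads/manual.pdf`), checked with Python's `urllib.robotparser`. That parser only does plain prefix matching and does not understand the `*` and `$` wildcards that Google supports for patterns such as whole file extensions, so the example sticks to literal paths.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: block one folder and one specific PDF file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Disallow: /downloads/manual.pdf
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# A rule matches every URL whose path starts with the rule's value.
print(parser.can_fetch("Googlebot", "https://example.com/private/notes.html"))   # False
print(parser.can_fetch("Googlebot", "https://example.com/downloads/manual.pdf")) # False
print(parser.can_fetch("Googlebot", "https://example.com/downloads/other.pdf"))  # True
```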

You can also slow down how fast bots crawl your site by adding a Crawl-delay directive to your Robots.txt file. This is useful when your site receives a lot of traffic. Not every crawler honors this directive; Googlebot, for example, ignores it.
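Python's standard-library `urllib.robotparser` offers a quick way to check how a parser reads this directive: `crawl_delay()` returns the requested delay in seconds for a given crawler. The 10-second value matches the example rules that follow; the crawler name and URL are illustrative, and whether a real crawler actually waits is up to that crawler.

```python
from urllib.robotparser import RobotFileParser

# Ask every crawler to wait 10 seconds between requests.
ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 10
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.crawl_delay("Bingbot"))  # 10
print(parser.can_fetch("Bingbot", "https://example.com/docs/intro"))  # True: no path is blocked
```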

User-agent: *
Crawl-delay: 10

Restricting crawler access to admin data

User-agent: *
Disallow: /admin/
Sitemap: https://example.com/sitemap.xml

User-agent: * - Applies the rules that follow to every crawler.

Disallow: /admin/ - Blocks crawlers from accessing the admin data under /admin/.

Sitemap: https://example.com/sitemap.xml - Tells crawlers where to find the sitemap. This makes crawling easier because the sitemap lists all of the site's URLs.
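A minimal sketch of how a parser reads these three lines, using Python's `urllib.robotparser` (`site_maps()` requires Python 3.8 or later). The URLs under `example.com` are hypothetical, as in the rules themselves.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Sitemap: https://example.com/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

# Anything under /admin/ is blocked; everything else stays crawlable.
print(parser.can_fetch("Googlebot", "https://example.com/admin/users"))  # False
print(parser.can_fetch("Googlebot", "https://example.com/docs/intro"))   # True
# The Sitemap line is independent of the User-agent group.
print(parser.site_maps())  # ['https://example.com/sitemap.xml']
```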

Blocking a specific search engine from crawling

User-agent: Bingbot
Disallow: /

The Robots.txt file above blocks Bingbot from crawling the site.

User-agent: Bingbot - Specifies the crawler of the Bing search engine.

Disallow: / - Blocks Bingbot from crawling any page on the site.
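Because the only group in this file names Bingbot, other crawlers are unaffected. A minimal check with Python's `urllib.robotparser`, assuming a hypothetical URL on `example.com`:

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: Bingbot
Disallow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/docs/intro"
print(parser.can_fetch("Bingbot", url))    # False: the Bingbot group blocks everything
print(parser.can_fetch("Googlebot", url))  # True: no group applies, so access is allowed
```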

Best practices

Add links to your most important pages.

Block links to pages that don't provide any value.

Add the location of your sitemap to the Robots.txt file.

A site can have only one Robots.txt file, so you can't add it twice. See the basic guidelines in the Google Search Central documentation for more information.

NOTE

A web crawler, also called a spider or spiderbot, is a program or script that automatically navigates the web and collects information about websites. Search engines such as Google, Bing, and Yandex use crawlers to copy a site's information to their own servers.

Crawlers open new tabs and scroll through website content, much like a user viewing a web page. They also collect data or metadata about the website and related entities (such as the links on a page, broken links, sitemaps, and HTML code) and send it to their search engine's servers. Search engines use this recorded information to index content effectively for search results.

Frequently asked questions

How do I remove my Document360 project from the Google search index?

To exclude the entire project from the Google search index:

Navigate to Settings in the left navigation bar in the Knowledge base portal.

In the left navigation pane, go to Knowledge base site > Article settings & SEO > SEO tab.

On the SEO tab, click Edit next to Robots.txt.

Paste the following code:

User-agent: Googlebot
Disallow: /

Click Update.
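The slash after `Disallow:` is what makes this rule block the whole site: an empty `Disallow:` value blocks nothing. The minimal sketch below compares the two forms with Python's standard-library `urllib.robotparser`; the helper `googlebot_allowed` and the URL are hypothetical, and Google's own parser may differ in edge cases.

```python
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt: str, url: str) -> bool:
    """Hypothetical helper: parse robots_txt and report whether Googlebot may fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


url = "https://example.com/docs/intro"
block_all = "User-agent: Googlebot\nDisallow: /\n"
empty_rule = "User-agent: Googlebot\nDisallow:\n"

print(googlebot_allowed(block_all, url))   # False: "/" matches every path
print(googlebot_allowed(empty_rule, url))  # True: an empty Disallow allows everything
```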

How do I prevent tag pages from being indexed by search engines?

To exclude tag pages from search engines:

Go to Settings in the left navigation bar.

Go to Knowledge base site > Article settings & SEO > SEO tab.

Click Edit next to Robots.txt.

Paste the following code:

User-agent: *
Disallow: /docs/en/tags/

Click Update.
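To confirm that the rule catches tag pages but leaves articles crawlable, here is a minimal check with Python's `urllib.robotparser`. The domain and the slugs `security` and `getting-started` are hypothetical. Because matching is by prefix, tag pages under a different path (for example, another language code, if your site uses one) would not be covered by this rule.

```python
from urllib.robotparser import RobotFileParser

ROBOTS_TXT = """\
User-agent: *
Disallow: /docs/en/tags/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

print(parser.can_fetch("Googlebot", "https://example.com/docs/en/tags/security"))    # False
print(parser.can_fetch("Googlebot", "https://example.com/docs/en/getting-started"))  # True
```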